For the past two and a half years, we've been chasing an ambitious, maybe a little crazy, idea: what if you could feed an AI any study material (scribbled notes, a textbook chapter, a YouTube video) and have it instantly build a personalized course for you? At Sizzle AI, Jerome Pesenti, a small, brilliant team, and I did just that.

We built it. It worked, and we grew a small but dedicated user base. Then we hit the reality of the market and struggled to scale as fast as we needed to. Now, just a few months later, that setback has turned into the most exciting opportunity of my career: Sizzle was acquired by Campus.edu.

And so begins our next chapter.

This is the first in a series of articles meant to peel back the curtain on what we built at Sizzle, why we think it’s novel, and how it’s about to become the engine for our new, audacious goal at Campus: to become the leading institution in the world for AI in learning.

So, what is Sizzle?

At its core, Sizzle was born from a deceptively simple question: How do we actually learn? Not in the abstract, but in the messy, real-world context of a student cramming for a midterm or wrestling with a homework problem without support from a parent or tutor.

Most EdTech relies on static content. It can’t help you with your professor’s lecture notes or the particular quirks of your material. We wanted to build something dynamic, and with generative AI it is possible. Anyone can write an LLM prompt to help them study a topic, but to do it well, we had to go back to first principles, leaning heavily on established learning science. Two frameworks were our North Stars:

- The KLI Framework (Knowledge-Learning-Instruction): This is about the "what." It posits that any subject can be broken down into atomic, testable "Knowledge Components" or KCs. Think of these as the Lego blocks of knowledge. A single math problem might involve several KCs: identifying the formula, substituting variables, performing the calculation, etc. [Koedinger et al., 2012].

- The ICAP Framework (Interactive, Constructive, Active, Passive): This is about the "how." It argues that learning becomes more effective as the student puts more cognitive effort into the material. Memory is the residue of thought. Attending a lecture or reading notes (Passive) is OK. Actively answering questions to recall concepts is better (Active). Writing or describing a concept is better still (Constructive). And interacting with other students (or an AI) to work through material is best of all (Interactive). [Chi and Wylie, 2014].

Sizzle’s mission was to build a product that could perform this deconstruction on the fly. A user uploads their materials, and our system acts like a hyper-caffeinated and obsessive TA: breaking it all down into small KCs and then building a bespoke course of practice exercises, lessons, and quizzes designed to drive active and constructive learning. We have yet to crack Interactive Learning.
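To make the two frameworks concrete, here is a minimal sketch of how KCs and exercises could be represented. The class names, field names, and the sample KCs are hypothetical and chosen for illustration; they are not Sizzle's actual schema.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class IcapMode(IntEnum):
    """ICAP engagement modes, ordered from least to most cognitive effort."""
    PASSIVE = 1
    ACTIVE = 2
    CONSTRUCTIVE = 3
    INTERACTIVE = 4


@dataclass(frozen=True)
class KnowledgeComponent:
    """One atomic, testable unit of knowledge (a KC in KLI terms)."""
    kc_id: str
    description: str


@dataclass
class Exercise:
    """A practice item tagged with the KCs it exercises and its ICAP mode."""
    prompt: str
    mode: IcapMode
    kc_ids: list[str] = field(default_factory=list)


# Hypothetical KCs for a single math problem, mirroring the example above.
kcs = [
    KnowledgeComponent("kc-formula", "Identify the formula"),
    KnowledgeComponent("kc-substitute", "Substitute the variables"),
    KnowledgeComponent("kc-calculate", "Perform the calculation"),
]

# One exercise can touch several KCs at once.
exercise = Exercise(
    prompt="A car accelerates from rest at 3 m/s^2 for 4 s. How far does it travel?",
    mode=IcapMode.ACTIVE,
    kc_ids=[kc.kc_id for kc in kcs],
)
```

Using an ordered enum makes it easy to ask whether one exercise demands more engagement than another, for example `IcapMode.CONSTRUCTIVE > IcapMode.ACTIVE`.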

How We Built Sizzle

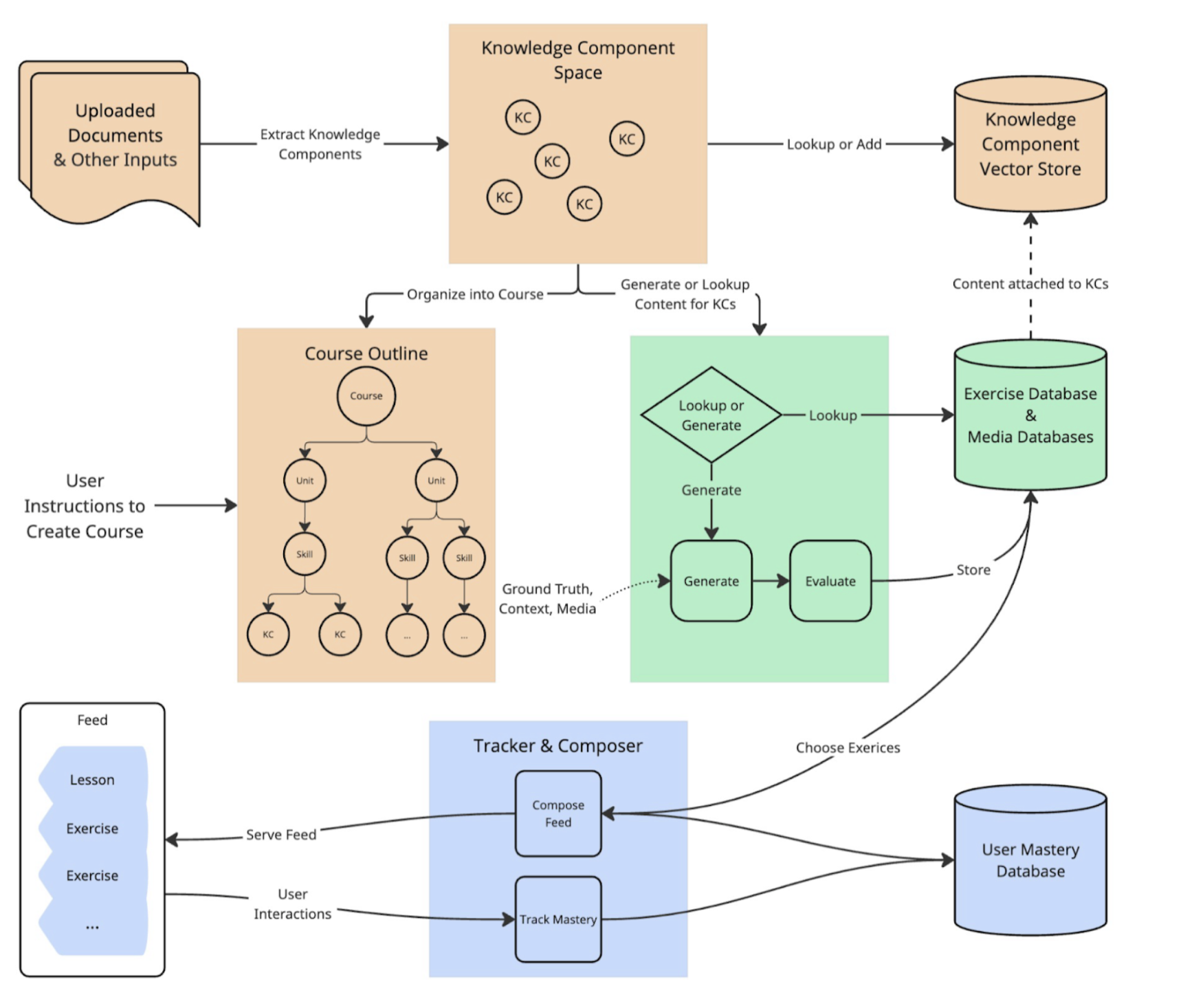

Saying you're "using AI for education" is easy. Actually building a system that can be shown to be effective, reliable, and grounded in evidence is brutally hard. Here’s a quick look at our engine's main components. Later posts in this series will break down each part of this system diagram in detail.

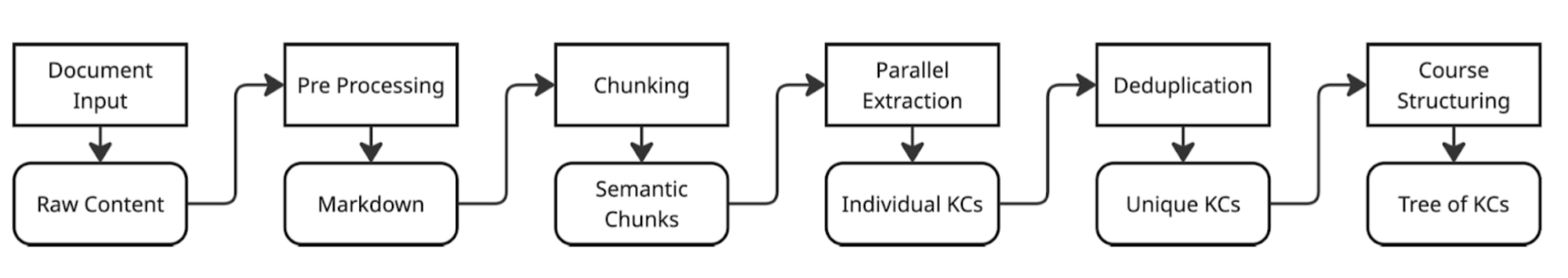

The Deconstructor: Extracting Knowledge Components

Our pipeline ingests any document, from a PDF to a video transcript. Using a battery of LLMs, we perform a bottom-up extraction, identifying every single KC. This is our foundational layer. By chunking the documents and processing them in parallel, we can generate a full curriculum graph in under 30 seconds.
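The chunk-then-parallelize idea can be sketched in a few lines. This is an illustration under simplifying assumptions, not the production pipeline: `extract_kcs` is a hypothetical stand-in for an LLM call (here it just treats each sentence as a KC), chunking is by word count, and the output is a flat, de-duplicated list rather than a curriculum graph.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_text(text: str, max_words: int = 200) -> list[str]:
    """Split a document into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def extract_kcs(chunk: str) -> list[str]:
    """Hypothetical stand-in for an LLM call: treat each sentence as one KC."""
    return [s.strip() for s in chunk.split(".") if s.strip()]


def deconstruct(text: str, max_words: int = 200, workers: int = 8) -> list[str]:
    """Extract KCs from all chunks in parallel, then merge and de-duplicate."""
    chunks = chunk_text(text, max_words)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input chunks.
        per_chunk = list(pool.map(extract_kcs, chunks))

    seen: set[str] = set()
    kcs: list[str] = []
    for batch in per_chunk:
        for kc in batch:
            key = kc.lower()
            if key not in seen:  # drop KCs that appear in more than one chunk
                seen.add(key)
                kcs.append(kc)
    return kcs
```

Threads suit this shape of work because each call would spend most of its time waiting on a network response, so total latency is closer to the slowest chunk than to the sum of all chunks.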

The Content Factory: Generating Exercises

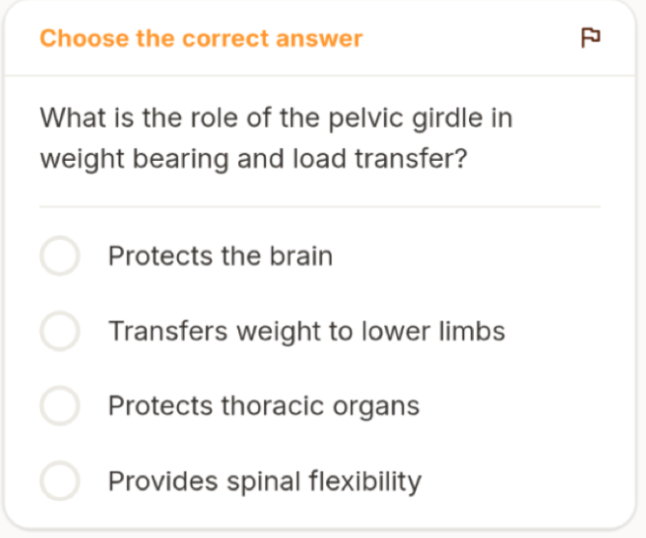

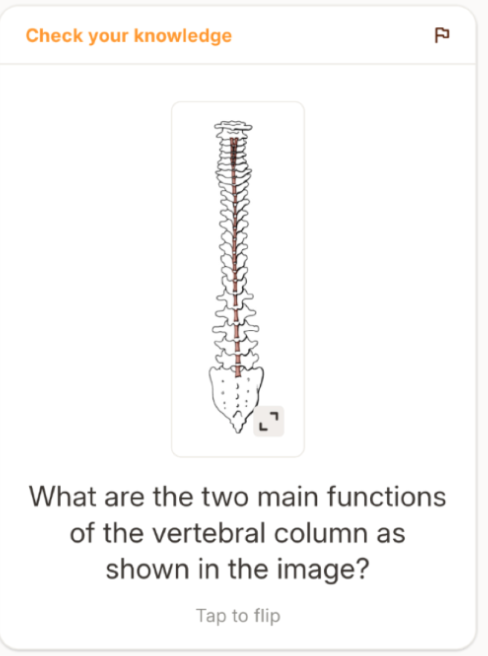

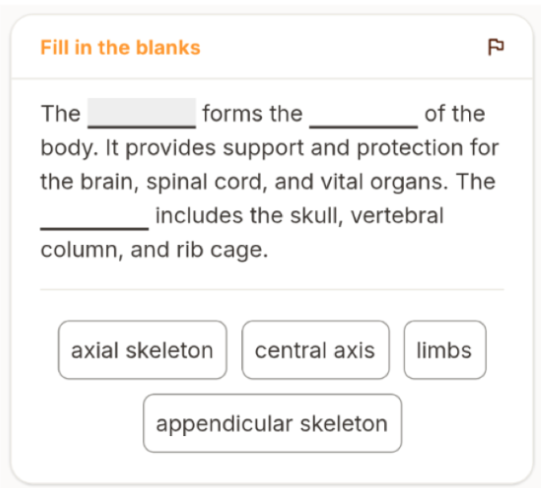

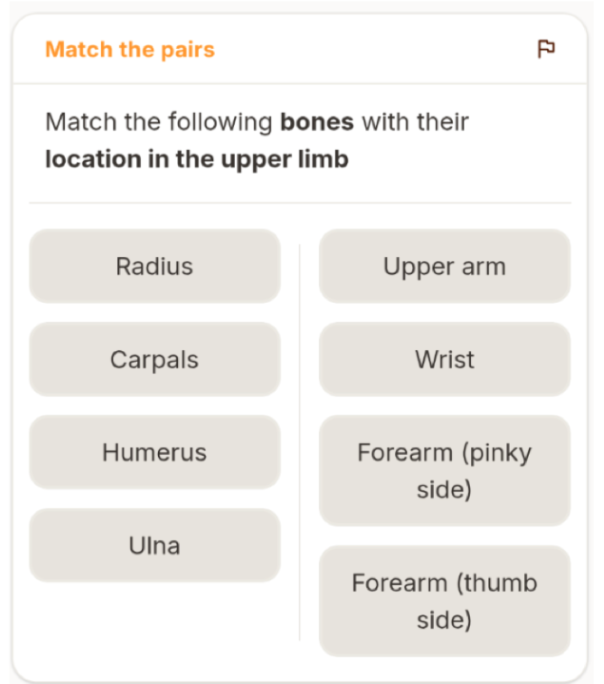

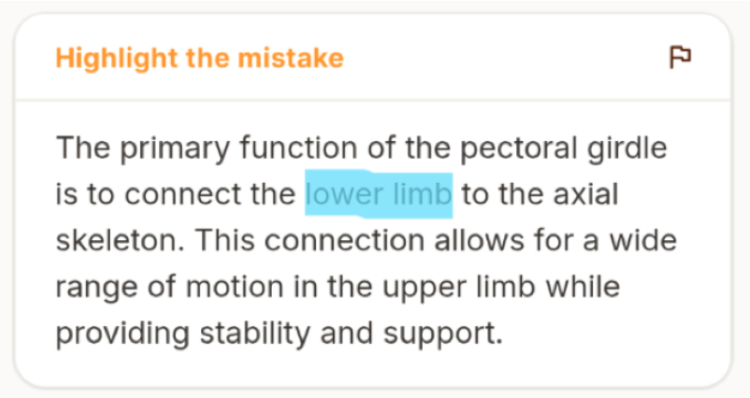

For each KC, we need high-quality practice material. We use a retrieval-augmented generation (RAG) system that grounds our LLMs with context from the user's documents or trusted external sources like Wikipedia. This helps keep the content relevant and factually accurate. We generate seven different types of exercises, shown in the images above.
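For readers new to RAG, the sketch below shows the basic shape: retrieve the passages most similar to a KC, then place them in the prompt so the generator is grounded in the source material. Everything here is an assumption made for illustration. A real system would typically use embedding-based retrieval rather than the bag-of-words cosine similarity used here, and the prompt wording and function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(passages, key=lambda p: cosine(q, Counter(tokenize(p))), reverse=True)
    return ranked[:k]


def build_prompt(kc: str, passages: list[str], k: int = 2) -> str:
    """Assemble a generation prompt grounded in the retrieved passages."""
    context = "\n".join(f"- {p}" for p in retrieve(kc, passages, k))
    return (
        "Using only the context below, write one practice question "
        f"for this knowledge component: {kc}\n\nContext:\n{context}"
    )


# Hypothetical passages standing in for chunks of a user's uploaded notes.
notes = [
    "Mitochondria produce ATP through cellular respiration.",
    "The French Revolution began in 1789.",
    "Photosynthesis converts light into chemical energy.",
]
prompt = build_prompt("How do mitochondria produce ATP?", notes, k=1)
```

The resulting `prompt` string would then be sent to whichever LLM generates the exercise.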

The Quality Control: AI Judging AI

Let’s be honest: LLMs lie. Or, more charitably, they hallucinate. In education, that can be directly harmful to the student. To prevent this, we built a robust, multi-stage evaluation pipeline. Generated exercises are judged by a panel of other LLMs (an approach called PoLL, short for Panel of LLM evaluators) that act as a jury, scoring them on factual accuracy, relevance, and structural integrity. We validated this LLM jury against the judgments of human AP teachers and reached over 95% accuracy. The result is that we throw out about 36% of what our generators create. We’re not just building; we’re obsessively curating. We’ll go into more detail on this step in a later article.
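The jury step can be pictured as pooling scores from several judges and accepting an exercise only if it clears a bar on every criterion. The sketch below assumes each judge returns a score between 0 and 1 per criterion, pools by simple averaging, and uses a threshold of 0.7. All three choices are illustrative assumptions, not the actual scoring scheme.

```python
from dataclasses import dataclass
from statistics import mean

# The three criteria named in the text; the snake_case keys are hypothetical.
CRITERIA = ("factual_accuracy", "relevance", "structural_integrity")


@dataclass
class Verdict:
    accepted: bool
    mean_scores: dict[str, float]


def jury_verdict(ballots: list[dict[str, float]], threshold: float = 0.7) -> Verdict:
    """Pool per-criterion scores from several judges by averaging.

    An exercise is accepted only if the pooled score for every criterion
    is at or above the threshold.
    """
    mean_scores = {c: mean(b[c] for b in ballots) for c in CRITERIA}
    accepted = all(score >= threshold for score in mean_scores.values())
    return Verdict(accepted, mean_scores)


# Hypothetical ballots from three judge models for one generated exercise.
ballots = [
    {"factual_accuracy": 0.9, "relevance": 0.8, "structural_integrity": 0.9},
    {"factual_accuracy": 0.8, "relevance": 0.9, "structural_integrity": 0.9},
    {"factual_accuracy": 0.9, "relevance": 0.9, "structural_integrity": 0.8},
]
verdict = jury_verdict(ballots)
```

Requiring every criterion to pass, rather than averaging across criteria, means a well-formatted but factually wrong exercise cannot be rescued by its other scores.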

The Personalized Tutor: The Feed Composer

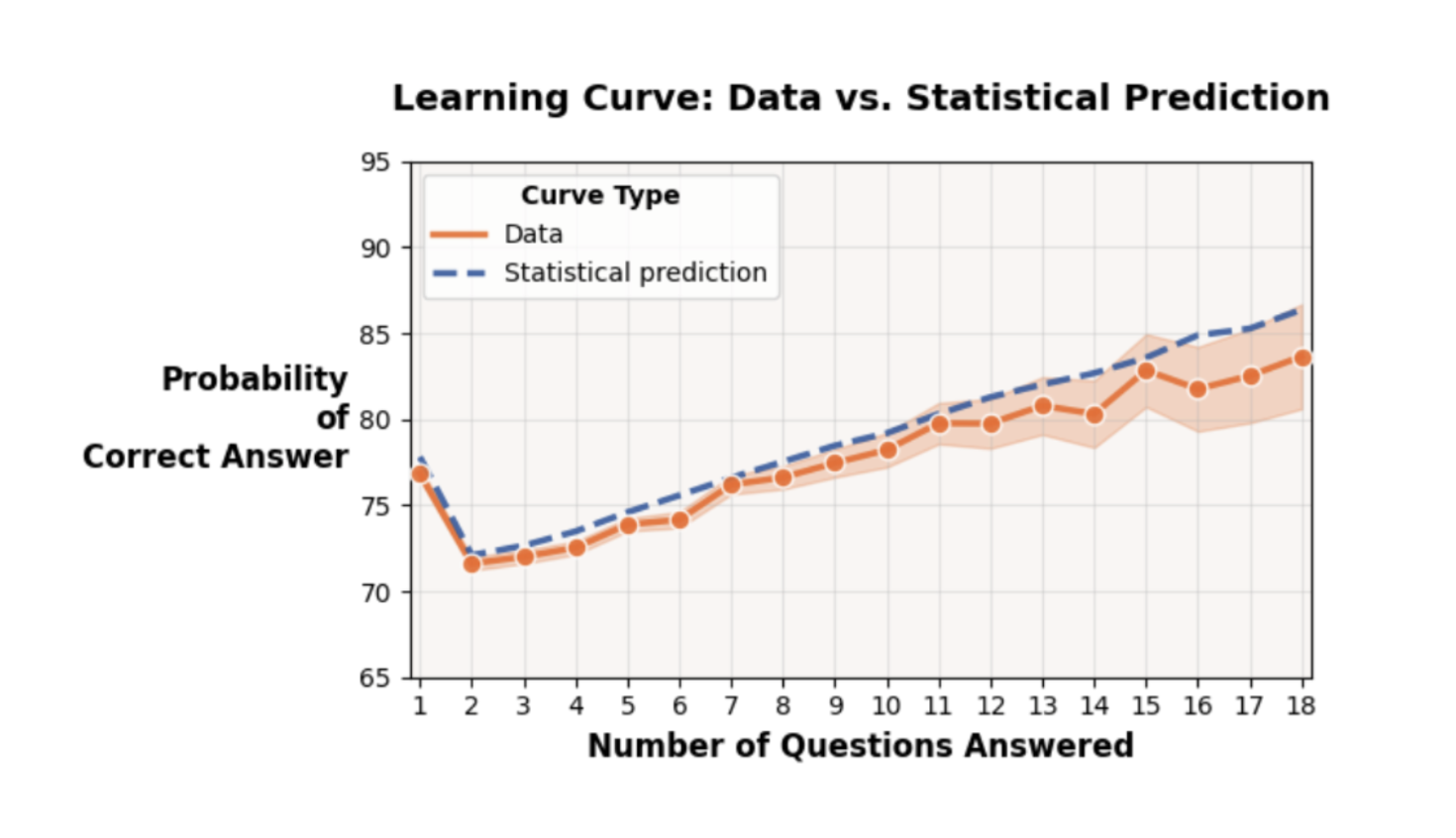

This is where it gets really interesting. How do you sequence exercises for optimal learning? We dug into the research and found an astonishing regularity in student learning rates [Koedinger et al., 2023]. The big takeaway: while students come in with wildly different initial knowledge, the rate at which they learn from practice is remarkably consistent. This means the biggest lever we have is opportunity. Our Feed Composer prioritizes exercises from the least-practiced KCs, ensuring balanced practice and implementing a natural form of spaced repetition. It also optimizes for semantic diversity to keep things from getting stale. We’ll go into more detail in a later article.
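The least-practiced-first rule is simple enough to sketch directly. The version below is a simplified illustration with hypothetical names: it greedily picks the exercise whose KC has the fewest practice opportunities so far, and it leaves out the semantic-diversity step entirely.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Exercise:
    exercise_id: str
    kc_id: str


def compose_feed(
    exercises: list[Exercise],
    practice_counts: dict[str, int],
    length: int,
) -> list[Exercise]:
    """Greedily build a feed that favors the least-practiced KCs."""
    counts = Counter(practice_counts)  # KCs never practiced count as 0
    remaining = list(exercises)
    feed: list[Exercise] = []
    while remaining and len(feed) < length:
        # min() returns the first minimal item, so ties go to list order.
        nxt = min(remaining, key=lambda e: counts[e.kc_id])
        feed.append(nxt)
        remaining.remove(nxt)
        # Scheduling an exercise counts as one more opportunity for its KC,
        # which pushes that KC back and spaces out its next appearance.
        counts[nxt.kc_id] += 1
    return feed


# Hypothetical pool: KC "A" is well practiced, "B" barely, "C" not at all.
pool = [
    Exercise("e1", "A"),
    Exercise("e2", "A"),
    Exercise("e3", "B"),
    Exercise("e4", "C"),
]
feed = compose_feed(pool, {"A": 5, "B": 1}, length=3)
```

Because each scheduled exercise bumps its KC's count, the same KC is unlikely to appear twice in a row unless it is far behind the others, which is where the spacing effect comes from.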

So that’s the architecture. But does it actually work? With over a million practice attempts from our users, we had enough data to ask some fundamental questions. We ended up reproducing one of the most fascinating findings in recent learning science: the regularity in student learning rate described above. While students open the app with wildly different levels of prior knowledge, the rate at which their accuracy improves with each practice opportunity is remarkably consistent. This finding became the empirical bedrock of our Feed Composer. It suggests that the most powerful lever for mastery isn't some esoteric, hyper-complex personalization algorithm; it's simply ensuring every student gets enough focused practice on the right KCs. It's a beautifully simple and powerful principle.
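One way to picture this regularity is a logistic learning curve in which a student's log-odds of answering correctly rise by a fixed amount with each practice opportunity. The sketch below assumes that model form, and the numbers (starting accuracies of 50% and 70%, a rate of 0.1 log-odds per opportunity, an 80% mastery bar) are illustrative choices rather than values measured from our data.

```python
import math


def accuracy(initial_accuracy: float, rate: float, opportunities: int) -> float:
    """Predicted accuracy after a number of practice opportunities.

    Assumes log-odds of a correct answer grow linearly with practice:
    logit(p_n) = logit(p_0) + rate * n.
    """
    logit = math.log(initial_accuracy / (1 - initial_accuracy)) + rate * opportunities
    return 1 / (1 + math.exp(-logit))


def opportunities_to_mastery(initial_accuracy: float, rate: float, mastery: float = 0.8) -> int:
    """Smallest number of opportunities needed to reach the mastery level."""
    if rate <= 0:
        raise ValueError("rate must be positive, otherwise mastery is never reached")
    n = 0
    while accuracy(initial_accuracy, rate, n) < mastery:
        n += 1
    return n


# Two hypothetical students with the same learning rate but different starts.
novice = opportunities_to_mastery(initial_accuracy=0.5, rate=0.1)
advanced = opportunities_to_mastery(initial_accuracy=0.7, rate=0.1)
```

With an identical rate, the only thing separating the two students is how many opportunities they need, which is why the scheduling of practice matters more than tuning the rate itself.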

The Next Chapter: Sizzle at Campus

And this brings us to now. Why is this acquisition so exciting? Because for the first time, we have a living laboratory.

At Sizzle, we were building for motivated students who might download the app as a supplement. But we had no way to ensure they used it consistently, no way to measure how it affected their actual grades or knowledge retention, and no way to get it into the hands of the students who might need it most. At Campus, we have it all: a thriving, human-centric college with dedicated success coaches, amazing professors, TAs, and most importantly, students we can learn from. The vision laid out by our founder, Tade Oyerinde, to combine best-in-class human instruction with a platform that adapts to each student, is exactly the environment we need to take this to the next level.

Our roadmap isn’t just about integrating Sizzle’s tech into Campus. It’s about answering the big questions:

- Can we better assess and tailor learning to the individual student by observing which KCs they know well and which they struggle with?

- Can we create feedback loops that give professors real-time, KC-level insights into where their class is struggling?

- Can we improve the in-class experience by promoting more interactivity and community?

- Can we automatically generate personalized "remedial loops" for students who fall behind, without them ever feeling singled out?

- Can we build a system that designs courses and assessments that are robust in a world where everyone is using AI?

This is our chance to run rigorous, state-of-the-art experiments at scale. To prove, with data, that complementing great teachers with truly intelligent AI leads to better outcomes. We plan to share and publish our results along the way.

Come Build With Us

The Sizzle journey was one of the most intellectually rewarding projects of my career. But at Campus, the work feels different. It feels essential, unlike anything in my tech career up to this point. We have the team, the resources, and a real-world environment to explore the frontier of AI in learning. The outcome is granting real students real degrees, which can change the trajectory of their lives. The ingredients are all here.

This isn’t just about another EdTech product. For us, this is our shot at making history. If that sounds like the kind of problem you want to work on, you should check out our careers page.

The next post in this series will go deeper into our KC extraction pipeline. Stay tuned.